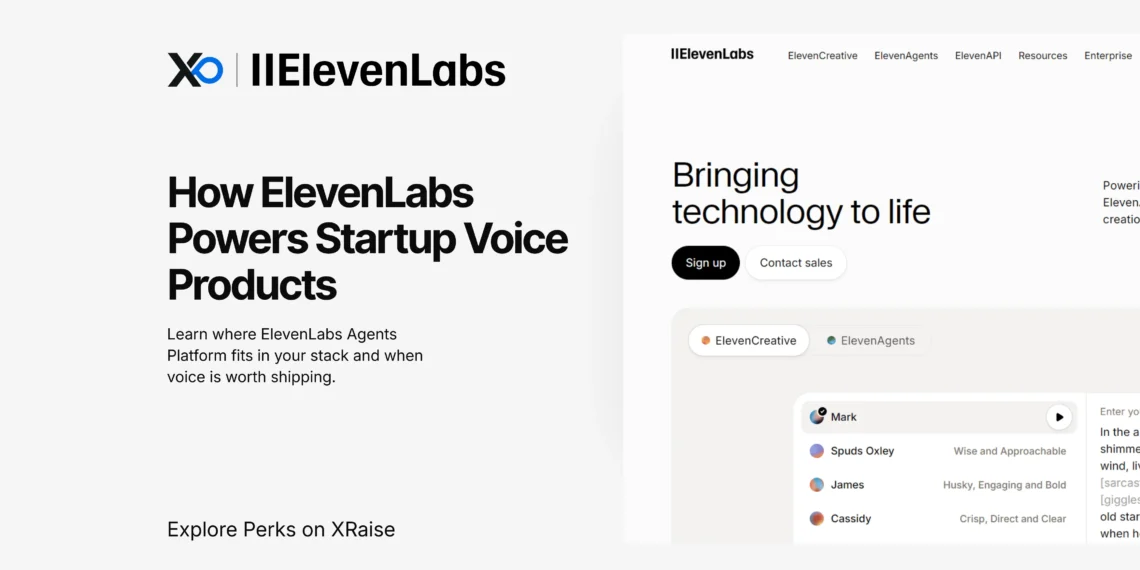

ElevenLabs Agents Platform helps startups build and run real-time voice agents across web, mobile, and phone without stitching the stack together. Use it when you need low-latency speech plus deployment and monitoring workflows that hold up with real users.

TL;DR

ElevenLabs is an AI audio platform for text-to-speech, speech-to-text, dubbing, and voice agents; ElevenLabs Agents Platform is best when you’re building real conversations (web, mobile, or phone) and need low latency plus monitoring.

- What it is: A platform that combines TTS, STT, dubbing, and an agents layer you can configure and deploy.

- Why it matters: You can test voice UX in production-like conditions without building speech + telephony plumbing from scratch.

- What teams use it for: A first-line support agent that answers common calls and escalates edge cases.

- Next step: Confirm current models, limits, and plan terms in ElevenLabs’ official docs.

What is ElevenLabs?

ElevenLabs is an AI audio platform that provides text-to-speech, speech-to-text, dubbing, and an agents platform for building and operating real-time voice agents across web/mobile/telephony and APIs.

Who it’s for

- Founders and product teams shipping voice as a feature (support, sales, tutoring, accessibility)

- Engineers who need low-latency TTS and real-time transcription

- Teams localizing audio/video at scale with dubbing

What problem it solves

- Voice that feels “real-time” (latency, interruptions, turn-taking)

- One deployable agent across web, mobile, and phone instead of rebuilding three times

- A measurable iteration loop (tests, transcripts, analytics) rather than a demo

What it replaces

- Separate STT + TTS + telephony glue + custom interruption handling

- Manual re-recording for every language when dubbing can ship first-pass tracks

Why ElevenLabs matters at startup speed

Voice products fail in the iteration cycle: scripts change, names break, latency feels off, and “works in staging” fails on real calls. The platform value is compressing that cycle with low-latency speech, deployable surfaces, and operational controls.

| Startup loop | Without a platform | With ElevenLabs |
|---|---|---|
| Ship a voice MVP | Assemble vendors + fight edge cases | Configure, deploy, iterate |
| Handle interruptions | Custom logic and timing bugs | Built-in turn-taking layer |
| Go multilingual | Re-recording + heavy ops | Dubbing + multilingual speech |
| Survive spikes | Silent drops at concurrency limits | Plan limits + optional burst (cost tradeoff) |

Takeaway: you’re buying time, meaning fewer weeks lost to “voice plumbing” before you can learn from users.

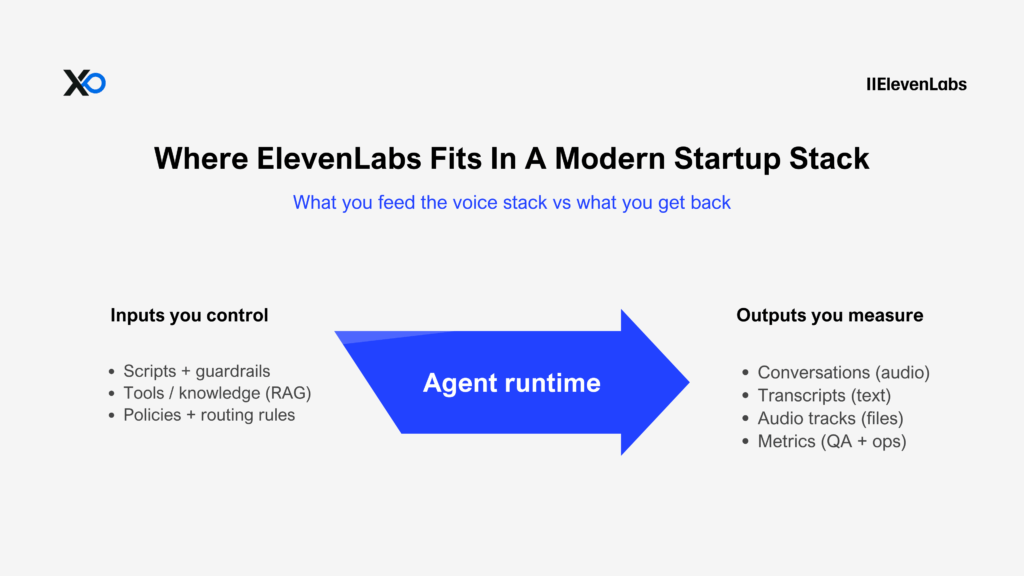

Where ElevenLabs fits in a modern startup stack

Case 1: Support voice agent (phone + web).

You define narrow intents (order status, reschedule, basic troubleshooting) and keep a human escalation path. ElevenLabs handles STT/TTS, turn-taking, and deployment to widget or phone.
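A minimal sketch of the “narrow intents plus human escalation” pattern, assuming a toy keyword matcher standing in for whatever intent detection your LLM or agent configuration actually performs. The intent names, keyword lists, and the `route` function are hypothetical illustrations, not part of the ElevenLabs API.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical narrow intents for a first-line support agent. A real agent
# would let the LLM (or tool definitions) classify requests, not keywords.
INTENT_KEYWORDS = {
    "order_status": ("where is my order", "order status", "tracking"),
    "reschedule": ("reschedule", "change my appointment", "move my booking"),
    "troubleshooting": ("not working", "won't turn on", "error"),
}

# Topics the agent should never handle alone (assumption for illustration).
SENSITIVE_KEYWORDS = ("refund", "fraud", "cancel my account", "lawyer")


@dataclass
class Route:
    intent: Optional[str]
    escalate: bool
    reason: str


def route(transcript: str) -> Route:
    """Map one caller utterance to a narrow intent or a human handoff."""
    text = transcript.lower()
    # Sensitive topics always go to a human, even if an intent also matches.
    if any(k in text for k in SENSITIVE_KEYWORDS):
        return Route(None, True, "sensitive topic")
    for intent, phrases in INTENT_KEYWORDS.items():
        if any(p in text for p in phrases):
            return Route(intent, False, "matched narrow intent")
    # Anything outside the allowed intents is a "no match": escalate.
    return Route(None, True, "no matching intent")
```

Checking sensitive topics before intents keeps escalation conservative: a caller who mentions a refund while asking about an order still reaches a human.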

Case 2: Voice-first learning.

In practice, you pair real-time STT (Scribe v2 Realtime) with low-latency TTS inside an agent loop, then iterate using transcripts and measurable outcomes.

ElevenLabs Agents Platform inputs → outputs

Key features of ElevenLabs Agents Platform

Flash v2.5 TTS (speed-first voice)

What it enables: Real-time text-to-speech where latency is the product (ElevenLabs docs cite ~75ms excluding app/network latency).

Founder benefit: Your agent stops feeling “laggy,” which is usually the make-or-break point for call completion and user trust.

Scribe v2 Realtime (live transcription)

What it enables: Low-latency speech-to-text designed for agent-style turn-taking and live routing (docs cite ~150ms excluding app/network).

Founder benefit: You can debug from transcripts, measure failure modes, and improve the agent based on real conversations.
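Both latency figures above exclude application and network time, so a realistic per-turn budget has to add those back. The sketch below sums the approximate documented model figures (~150ms STT, ~75ms TTS) with turn-detection, LLM, and network entries that are placeholder assumptions. Replace them with your own measurements before trusting the total.

```python
from typing import Dict, List

# Rough per-turn latency budget for a voice agent, in milliseconds.
# STT and TTS are the approximate figures cited in ElevenLabs docs
# (model-only, excluding app/network latency). Every other entry is a
# placeholder assumption to replace with your own measurements.
BUDGET_MS: Dict[str, int] = {
    "stt_scribe_v2_realtime": 150,  # cited ~150ms
    "turn_detection_wait": 200,     # assumption: end-of-turn detection
    "llm_first_token": 350,         # assumption: depends on model/provider
    "tts_flash_v2_5": 75,           # cited ~75ms
    "network_round_trips": 120,     # assumption: client <-> server
}


def total_latency(budget: Dict[str, int]) -> int:
    """Time from the user finishing speech to first audible agent audio,
    treating every stage as strictly sequential (a pessimistic simplification)."""
    return sum(budget.values())


def largest_contributors(budget: Dict[str, int], n: int) -> List[str]:
    """Names of the n biggest stages: the first places to optimize."""
    return sorted(budget, key=budget.get, reverse=True)[:n]


if __name__ == "__main__":
    print(total_latency(BUDGET_MS), largest_contributors(BUDGET_MS, 2))
```

With these placeholder numbers, the LLM, not speech, dominates the turn. Streaming STT, LLM, and TTS usually overlap in practice, so the measured figure can be lower than this sequential sum.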

Agents architecture (STT + your choice of LLM + TTS + turn-taking)

What it enables: A documented, workflow-oriented agent system that’s more than “TTS + an LLM,” including turn-taking and operational tooling.

Founder benefit: Fewer moving parts to maintain, fewer edge-case bugs, and a shorter path from prototype to a real surface.

Deployments (web + telephony paths)

What it enables: Shipping the same agent to real channels, especially phone, without rewriting your entire stack. (Docs reference Twilio integration, SIP connectivity, widget, and SDK paths.)

Founder benefit: You test voice where it matters: real users, real calls, real constraints.

Dubbing (localization without re-recording)

What it enables: Dubbing that translates audio/video while preserving speaker characteristics, with documented UI/API workflows and file/time limits.

Founder benefit: You can validate multilingual demand early, before committing to expensive re-recording and full localization operations.

If you’re building a product on the ElevenLabs Agents Platform, start with these levers, because they change latency, reliability, and iteration speed the fastest.

How ElevenLabs Agents Platform works (explained simply)

ElevenLabs Agents Platform deployment surfaces

A production voice agent has four components: speech recognition, a language model, speech synthesis, and turn-taking. ElevenLabs documents coordinating STT (ASR), your choice of LLM, low-latency TTS, and a turn-taking model.
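To make the four components concrete, here is a toy, single-threaded sketch of one conversation turn. The `transcribe`, `generate_reply`, and `synthesize` stubs are hypothetical stand-ins for STT, your chosen LLM, and TTS. The barge-in rule (stop speaking when the user starts talking) illustrates the kind of problem a turn-taking layer handles; it is not how ElevenLabs implements it.

```python
from dataclasses import dataclass, field
from typing import List, Optional


def transcribe(audio: str) -> str:
    # Stub STT (hypothetical): treat the "audio" as already-transcribed text.
    return audio.strip()


def generate_reply(history: List[str], user_text: str) -> str:
    # Stub LLM (hypothetical): a real agent would call your chosen model
    # with the conversation history here.
    return f"You said: {user_text}. Anything else?"


def synthesize(text: str) -> List[str]:
    # Stub TTS (hypothetical): split the reply into word-sized "audio chunks"
    # that would be streamed to the caller one by one.
    return text.split()


@dataclass
class Turn:
    user_text: str
    reply: str
    spoken: List[str] = field(default_factory=list)
    interrupted: bool = False


def run_turn(history: List[str], user_audio: str,
             barge_in_at: Optional[int] = None) -> Turn:
    """One turn: STT -> LLM -> streamed TTS, yielding if the user barges in.

    barge_in_at is the index of the audio chunk at which the user starts
    talking again; None means the agent finishes its reply.
    """
    user_text = transcribe(user_audio)
    reply = generate_reply(history, user_text)
    turn = Turn(user_text, reply)
    for i, chunk in enumerate(synthesize(reply)):
        if barge_in_at is not None and i >= barge_in_at:
            # Turn-taking: stop playback instead of talking over the user.
            turn.interrupted = True
            break
        turn.spoken.append(chunk)
    # Log only what the caller actually heard, so the next LLM call does
    # not assume the cut-off part of the reply was delivered.
    history.append(f"user: {user_text}")
    history.append(f"agent: {' '.join(turn.spoken)}")
    return turn
```

Recording only the spoken portion of an interrupted reply is the subtle part: without it, the model “remembers” saying things the caller never heard.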

Practical flow:

- Pick a surface (widget/mobile/phone).

- Configure the agent (prompt, tools, knowledge).

- Test + monitor, then iterate with transcripts/analytics.
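The “configure” step can be treated as a small, reviewable object. The dataclass below is a hypothetical shape (its field names are not ElevenLabs’ configuration schema) that captures surface, prompt, tools, and knowledge, plus a pre-deploy check so an agent never ships without a human escalation path.

```python
from dataclasses import dataclass, field
from typing import List

# Surfaces named in the flow above.
SURFACES = {"widget", "mobile", "phone"}


@dataclass
class AgentConfig:
    surface: str
    system_prompt: str
    tools: List[str] = field(default_factory=list)
    knowledge_docs: List[str] = field(default_factory=list)
    # Hypothetical: a queue name or phone number for human handoff.
    escalation_target: str = ""


def validate(cfg: AgentConfig) -> List[str]:
    """Return human-readable problems; an empty list means ready to test."""
    problems = []
    if cfg.surface not in SURFACES:
        problems.append(f"unknown surface: {cfg.surface!r}")
    if not cfg.system_prompt.strip():
        problems.append("system prompt is empty")
    if not cfg.escalation_target:
        problems.append("no human escalation path configured")
    if len(set(cfg.tools)) != len(cfg.tools):
        problems.append("duplicate tool names")
    return problems
```

Running a check like this in CI before each deploy makes the “test” step repeatable rather than a manual review.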

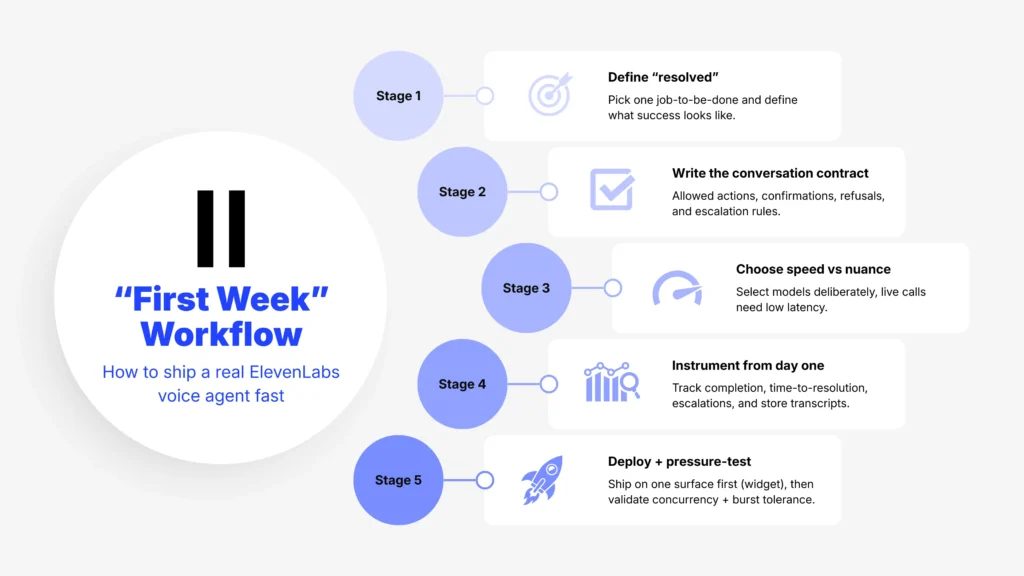

A practical first week on ElevenLabs Agents Platform

- Pick one job-to-be-done and define “resolved.”

- Write the conversation contract: allowed actions, confirmations, refusal rules, escalation.

- Choose speed vs nuance deliberately (live conversations want low latency).

- Instrument from day one: completion rate, time-to-resolution, escalation rate, “no match” intents; store transcripts.

- Deploy on one surface first (widget is usually fastest), then expand to telephony.

- Validate concurrency limits and decide on burst pricing tolerance.
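The day-one metrics in this list are simple ratios over stored call records. A minimal sketch, assuming a hypothetical `CallRecord` shape built from your own transcript store (not an ElevenLabs export format):

```python
from dataclasses import dataclass
from statistics import median
from typing import Dict, List, Optional


@dataclass
class CallRecord:
    resolved: bool   # met your written definition of "resolved"
    escalated: bool  # handed to a human
    no_match: bool   # agent could not map the request to an allowed intent
    seconds_to_resolution: Optional[float] = None  # None if unresolved


def weekly_metrics(calls: List[CallRecord]) -> Dict[str, float]:
    """Completion, escalation, and no-match rates plus median time-to-resolution."""
    n = len(calls)
    if n == 0:
        return {}
    times = [c.seconds_to_resolution for c in calls
             if c.resolved and c.seconds_to_resolution is not None]
    return {
        "completion_rate": sum(c.resolved for c in calls) / n,
        "escalation_rate": sum(c.escalated for c in calls) / n,
        "no_match_rate": sum(c.no_match for c in calls) / n,
        # Median is less distorted than the mean by a few very long calls.
        "median_time_to_resolution_s": median(times) if times else float("nan"),
    }
```

Tracking the no-match rate alongside escalations shows whether handoffs come from genuinely hard cases or from intents the agent was never given.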

Real experiences from founders and teams

Allô (telephony)

Allô reports moving from idea to production in one day, along with higher caller engagement.

Klarna (support)

Klarna reports a first-line phone agent and “up to 10× faster” resolutions for queries handled by the agent.

Klarna launched a voice AI assistant built with ElevenAgents as the first line of phone support in the United States.

Today, the assistant is available to Klarna’s 35 million US customers and delivers resolutions up to 10× faster for eligible queries. Read their whole story on ElevenLabs’ official website.

Revolut (fintech support)

Revolut reports 8× lower time-to-resolution, a 99.7% call success rate, and availability in 31+ languages. ElevenLabs Agents resolved tickets in under 5 minutes, while tickets handled by the prior setup took 8× longer or more (including queue time, and without adjusting for issue complexity). More than 99.7% of agent-handled calls completed successfully. These gains can compound at scale once voice becomes a reliable channel rather than a pilot. Read more here.

| Metric | Result |
|---|---|
| Time to resolution | 8× lower |
| Call success rate | 99.7% |
| Language availability | Live in 31+ languages |

MasterClass (learning)

MasterClass reports that over 75% of users interact via voice.

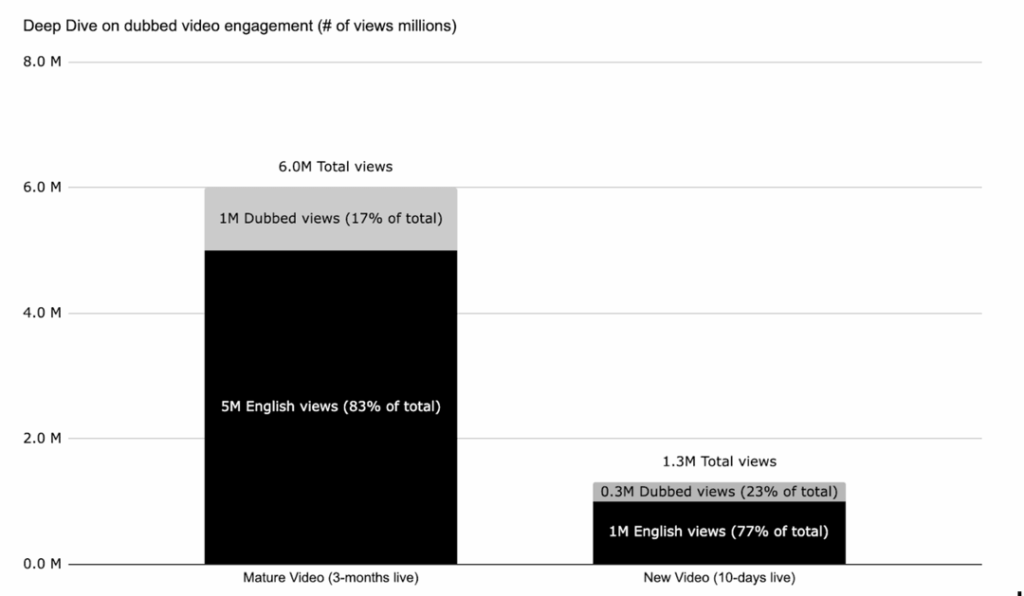

Drew Binsky (localization)

Drew Binsky reports up to 1 million extra views from non-English regions on dubbed videos. In June 2024, ElevenLabs worked with Drew Binsky to explore YouTube’s new feature, “Multi-Language Audio (MLA).” Using ElevenLabs’ Dubbing Studio, Drew released videos in Spanish, Portuguese, Arabic, German, and Italian.

The results were clear: on mature videos, dubbed tracks drew up to 1 million extra views from non-English-speaking regions (17% of total views), and on newer videos they already account for 300k of 1.3M total views to date (23%). Read their whole story here.

Ally Solutions

Ally Solutions used ElevenAgents to transform phone operations and scale support and revenue for Onsite Consulting, a Denver-based computer repair and IT company. Read more here.

| Metric | Result |
|---|---|
| Inbound calls handled autonomously | 92% handled end-to-end |
| Revenue from incremental upsells | ~$2,000 per month |

How ElevenLabs helps startups grow (the mechanisms)

Faster prototyping matters because voice is expensive to “get wrong.” The Agents quickstart targets a first working agent in minutes, so the cost of discovering whether voice belongs in your product drops significantly.

For example, a first-line support agent can reduce queues by handling repetitive informational calls and escalating sensitive cases. Meanwhile, dubbing and multilingual speech models let you test global expansion before you commit to re-recording and heavy localization ops.

ElevenLabs Agents Platform pricing, plans, and what to watch for

Budget rule: credits + concurrency

ElevenLabs uses credits, with published tiers and documented rules: credits reset each billing cycle, unused credits can roll over under certain conditions, and usage is billed per generation request.

| Plan | Price (monthly) | Credits / month | Best for |
|---|---|---|---|
| Free | $0 | 10k | First experiments |
| Starter | $5 | 30k | Early prototypes |
| Creator | $22 (first month shown as $11) | 100k | Higher-quality builds |
| Pro | $99 | 500k | Product embedding via API |
| Scale | $330 | 2M | Team workflows (3 seats) |
| Business | $1,320 | 11M | Higher-volume usage |
| Startup Grants | 12 months free | 33M characters | Eligible startups building real products |
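Because plans differ in both price and included credits, two quick comparisons help: cost per 1,000 credits, and the cheapest plan that covers expected usage. The sketch below hard-codes the list prices and credit amounts from the table above (which can change; check the official pricing page), ignores the Creator first-month discount and rollover, and leaves out Startup Grants because that row is measured in characters.

```python
from typing import Optional

# (name, monthly price in USD, credits per month), copied from the table above.
PLANS = [
    ("Free", 0, 10_000),
    ("Starter", 5, 30_000),
    ("Creator", 22, 100_000),
    ("Pro", 99, 500_000),
    ("Scale", 330, 2_000_000),
    ("Business", 1_320, 11_000_000),
]


def usd_per_1k_credits(price: float, credits: int) -> float:
    """Effective list price per 1,000 credits, assuming full usage."""
    return price / credits * 1_000


def cheapest_plan(expected_credits: int) -> Optional[str]:
    """Cheapest plan whose monthly credits cover expected usage, else None."""
    fits = [p for p in PLANS if p[2] >= expected_credits]
    if not fits:
        return None
    return min(fits, key=lambda p: p[1])[0]


if __name__ == "__main__":
    for name, price, credits in PLANS[1:]:
        print(name, round(usd_per_1k_credits(price, credits), 3))
```

At full usage the effective cost per 1,000 credits works out to roughly $0.17 (Starter), $0.22 (Creator), $0.20 (Pro), $0.17 (Scale), and $0.12 (Business), so the unit price is not strictly monotonic across tiers. Concurrency limits and seats may matter more than the per-credit price.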

Offer on XRaise: ElevenLabs Agents Platform

ElevenLabs for Startups is available on XRaise through the Startup Grants program. Eligible teams get free access for a fixed period, so they can test real voice features in a real product before paying retail while they’re still validating. In other words, it’s a structured window to prove voice ROI before committing to ongoing spend.

What you get

- 12 months free access via the Startup Grants program

- 33,000,000 characters included, valid for 12 months

- Scale-level benefits, including higher concurrency limits and improved support

- Access to core platform capabilities used to build voice agents and AI audio experiences

Why it matters for startups

- Lowers the cost barrier to shipping and learning from production usage (not just demos)

- Supports real-time use cases with low-latency model options (Flash v2.5 is documented as ~75ms excluding app/network latency)

- Helps teams iterate on multilingual voice experiences without hiring voice talent or running heavy audio production

Use cases / best for

- Teams building customer support or sales voice agents across web, mobile, or phone

- Products that need text-to-speech for narration, voice UI, or accessibility

- Startups localizing content with dubbing and multi-language workflows

- Developer teams integrating audio via APIs and SDKs

How to apply

Use this XRaise apply guide for the claim flow.

Limitations, risks, and when not to use it

When ElevenLabs Agents Platform is not a fit

- Prohibited uses include unauthorized/deceptive impersonation and other harmful uses.

- Burst pricing can raise costs during spikes (2× rate for excess calls).

- Concurrency limits vary by plan (Agents and TTS have different limits).

- Model and per-request limits exist (e.g., Flash v2.5 vs v3 limits).

- Dubbing has documented file size/duration limits (UI vs API).

- For regulated deployments, confirm what controls you can access at your tier (data residency/zero retention are documented, but tier access varies).
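To see what a “2× rate for excess calls” means during a spike, the sketch below splits concurrent calls into those within the plan limit, those served as burst at twice the base rate, and those beyond a burst cap. The plan limit, burst cap, call length, and per-minute base rate are all hypothetical inputs; look up your plan’s actual concurrency limit and burst rules before relying on the numbers.

```python
from dataclasses import dataclass

# The 2x multiplier is the burst rate cited above; everything else is an input.
BURST_MULTIPLIER = 2.0


@dataclass
class SpikeCost:
    within_limit: int
    burst: int
    over_cap: int
    cost: float


def spike_cost(concurrent_calls: int, plan_limit: int, burst_cap: int,
               minutes_per_call: float, base_rate_per_min: float) -> SpikeCost:
    """Cost of one spike where every call lasts minutes_per_call.

    plan_limit: concurrent calls included in the plan (hypothetical input).
    burst_cap: extra concurrent calls allowed above the limit (assumption).
    Calls beyond limit + cap are only counted here; whether they queue or
    fail depends on the platform.
    """
    within = min(concurrent_calls, plan_limit)
    burst = min(max(concurrent_calls - plan_limit, 0), burst_cap)
    over_cap = concurrent_calls - within - burst
    per_call = minutes_per_call * base_rate_per_min
    cost = within * per_call + burst * per_call * BURST_MULTIPLIER
    return SpikeCost(within, burst, over_cap, cost)
```

For example, 30 simultaneous 4-minute calls against a limit of 20 with a burst cap of 5 yields 20 normal calls, 5 burst calls billed at double, and 5 calls that do not get served at all, which is the cost-versus-availability tradeoff to decide on before launch.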

ElevenLabs vs alternatives (when they’re better)

| Alternative | Choose it when… | Choose ElevenLabs when… |
|---|---|---|
| AWS Polly | You want AWS-native TTS primitives | You want agents + audio + deploy paths |
| Google Cloud TTS | You’re committed to Google Cloud speech | You want end-to-end voice workflows |
| OpenAI Audio API (TTS) | You want LLM-coupled TTS | You need telephony + monitoring + full stack |
| Deepgram | STT is the primary need | You need both STT + TTS in an agent loop |

ElevenLabs Agents Platform FAQs

What is ElevenLabs Agents Platform used for?

Text-to-speech, speech-to-text, dubbing, and real-time voice agents across web/mobile/telephony.

Is ElevenLabs Agents Platform good for startups?

Yes, when voice is a real product surface and you can manage governance. It’s not ideal for one-off projects or for teams that can’t handle consent and escalation.

How fast can a small team get value?

In practice, a small team can get value within a week, provided it ships one narrow workflow, deploys on one surface, and then iterates using transcripts and outcomes.

Does it integrate with Twilio?

Yes, ElevenLabs documents a native Twilio integration for inbound/outbound calls.

How much does ElevenLabs Agents Platform cost?

Plans range from a $0 free tier to paid tiers. Billing is credit-based and charged per generation request, with reset and rollover rules.

What are the main limitations?

Concurrency/plan limits, spike-cost tradeoffs with burst pricing, and governance needs for voice use cases.

What’s the best alternative?

Pick based on your primary need: STT-first providers for transcription-heavy products, or cloud-native TTS if you just need speech primitives.

Where can I confirm the terms?

ElevenLabs’ official pricing, docs, and policies are the source of truth.

Final Takeaway

If you’re building voice into the product (support, sales, tutoring, accessibility), ElevenLabs is strongest when you care about real-time latency, deployable surfaces, and an agent workflow you can measure and improve. If your use case is one-off content or you need perfectly predictable costs under spikes, keep it simpler or choose a narrower provider. Confirm current models, limits, and policy constraints in the official docs/terms. To explore more startup tools and offers, browse the XRaise perk directory.